Tokenization

Tokenization is a highly effective way to protect sensitive data. When you tokenize data, sensitive data elements are substituted with randomly generated non-sensitive data elements.

Examples of sensitive information that are suitable for tokenization protection include:

- Primary Account Number (PAN)
- Personally Identifiable Information (PII)
- Protected Health Information (PHI), or any information deemed sensitive

Format-Preserving Tokens

Anypoint tokenization creates format-preserving tokens, which means the output tokens have the same format as the sensitive data input. Format-preserving tokenization ensures that changes are not required for existing enterprise data flows or data stores because the generated tokens conform to the existing data structure and validations. This method minimizes changes to existing applications compared to point-to-point encryption, which requires data protection implementation at each point.

A detokenization call to the tokenization service returns the original value, or identity, of a tokenized value.
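The round trip described above can be illustrated with a toy sketch. It is not the algorithm Anypoint uses and it is not secure; it only shows what "format-preserving" and "detokenization" mean in practice. The function names `tokenize` and `detokenize`, the key, and the per-position digit shift are all assumptions made for this illustration.

```python
import hashlib
import hmac

DIGITS = "0123456789"


def _shift(key: bytes, position: int) -> int:
    """Derive a per-position shift (0-9) from the key. Toy scheme, not secure."""
    digest = hmac.new(key, str(position).encode(), hashlib.sha256).digest()
    return digest[0] % 10


def tokenize(value: str, key: bytes) -> str:
    """Swap each digit for another digit; separators and length stay the same."""
    out = []
    for i, ch in enumerate(value):
        if ch in DIGITS:
            out.append(str((int(ch) + _shift(key, i)) % 10))
        else:
            out.append(ch)  # keep "-", spaces, and so on, so the format is preserved
    return "".join(out)


def detokenize(token: str, key: bytes) -> str:
    """Reverse tokenize(); only a holder of the key can recover the original."""
    out = []
    for i, ch in enumerate(token):
        if ch in DIGITS:
            out.append(str((int(ch) - _shift(key, i)) % 10))
        else:
            out.append(ch)
    return "".join(out)


key = b"hypothetical-demo-key"
token = tokenize("4111-1111-1111-1234", key)
print(token)                   # same length and grouping as the input
print(detokenize(token, key))  # the original value
```

Because the token keeps the length and grouping of the input, a downstream field validated as four groups of four digits accepts it unchanged.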

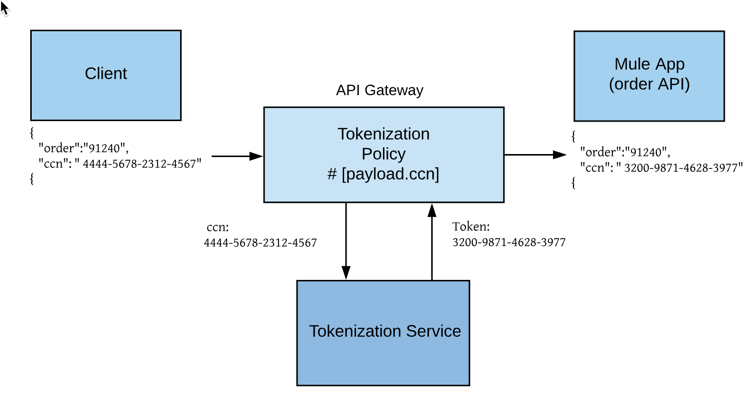

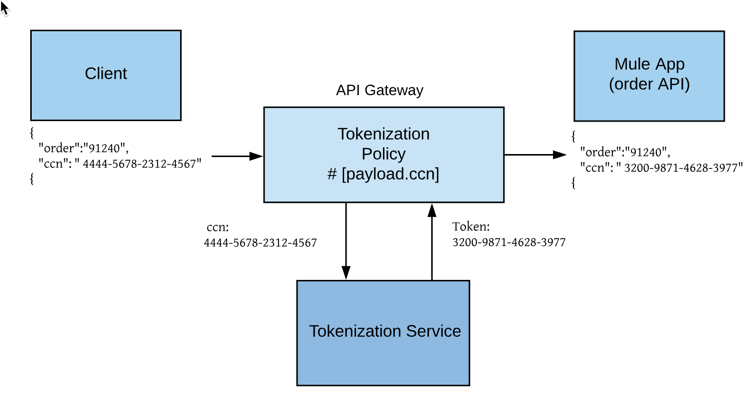

In this example, a tokenization format is created with the credit card data domain and assigned to the tokenization service. A tokenization policy is applied to the API gateway.

When a request whose payload contains credit card numbers is sent, the request is redirected to the API gateway that has the tokenization policy applied.

A connection is established between the API gateway and the tokenization service. The tokenization service receives the credit card information, transforms the data into tokens, and returns the tokenized data to the API gateway.

The API gateway replaces the credit card number with the tokenized data and sends the request to the app.
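The replacement step can be sketched as follows. This is an illustration only: the card-number pattern, the function `apply_tokenization_policy`, and the stand-in `fake_tokenization_service` are hypothetical and do not reflect how the actual policy selects fields or how the gateway calls the tokenization service.

```python
import json
import re
from typing import Callable

# Assumed pattern: 16 digits in four groups, with a consistent optional "-" or " " separator.
CARD_PATTERN = re.compile(r"\b\d{4}([- ]?)\d{4}\1\d{4}\1\d{4}\b")


def apply_tokenization_policy(body: str, tokenize: Callable[[str], str]) -> str:
    """Replace every card-number match in the request body with its token."""
    return CARD_PATTERN.sub(lambda match: tokenize(match.group(0)), body)


def fake_tokenization_service(card_number: str) -> str:
    """Placeholder for the remote service: maps each digit d to 9 - d."""
    return "".join(str(9 - int(c)) if c.isdigit() else c for c in card_number)


request_body = json.dumps({"customer": "A. Example", "card": "4111-1111-1111-1234"})
forwarded_body = apply_tokenization_policy(request_body, fake_tokenization_service)
print(forwarded_body)  # the app receives the token, not the card number
```

Passing the tokenizer in as a callable keeps the gateway-side logic separate from the service that produces the tokens, which mirrors the split between the API gateway and the tokenization service in the flow above.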

Masking

Anypoint Security also provides masking, another tokenization option that hides the identity of sensitive data. With masking, a configurable mask character is returned in place of the sensitive characters.

In the above example, using a masking format that preserves the last four characters returns the token ######--4567. You can’t return the identity of a masked token via a detokenization call to the tokenization service.
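A minimal sketch of a mask that preserves the last four characters is shown here. The function `mask`, its parameters, and the choice to leave separators untouched are assumptions for illustration; the exact output of a real masking format depends on how that format is configured.

```python
def mask(value: str, keep_last: int = 4, mask_char: str = "#") -> str:
    """Mask every letter or digit except the last `keep_last` of them."""
    remaining = sum(ch.isalnum() for ch in value)  # letters/digits still to process
    out = []
    for ch in value:
        if ch.isalnum():
            out.append(mask_char if remaining > keep_last else ch)
            remaining -= 1
        else:
            out.append(ch)  # assumption: separators are left as they are
    return "".join(out)


print(mask("4111-1111-1111-4567"))
print(mask("123456789", keep_last=4, mask_char="*"))
```

Unlike tokenization, this transformation discards the masked characters, so there is nothing from which the original value could be recovered.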

If you configure a policy in API Manager to detokenize and you select a masking format, the API gateway returns a

Prerequisites

To configure and use the tokenization service, you must:

- Meet all the prerequisites and requirements for running Anypoint Runtime Fabric on VMs/Bare Metal. Runtime Fabric on Self-Managed Kubernetes does not support tokenization.
  If you don’t see Security in Management Center or the Tokenization Service tab in Runtime Manager, contact your customer success manager to enable the tokenization service for your account.
- Have the correct permissions to manage tokenization.
  See Granting Tokenization Permissions.
- Have Runtime Fabric on VMs/Bare Metal with inbound traffic configured.
  See the Runtime Fabric documentation.
  Runtime Fabric requires the Anypoint Integration Advanced package or a Platinum or Titanium subscription to Anypoint Platform.
- Have a tokenization format, which describes the format and how data is tokenized.
  See Tokenization Formats.

Tokenization Benefits

The Tokenization service provides many benefits:

- Vaultless operation:
  - Sensitive data is not stored in the tokenization deployment.
  - Data growth is not based on the number of transactions processed or tokens issued, which removes data-vault management issues.
- Increased security:
  - Sensitive information is protected, which reduces your sensitive data zones.
  - The number of systems and applications that process, store, or transmit sensitive information is reduced, or those systems are removed from sensitive data scopes.
  - The chances of a data breach from auxiliary applications and data stores are negated, because the sensitive information stored in them is replaced by tokens.
  - Defense in depth and least privilege are strengthened for sensitive data. Authorization is required for the tokenization service to detokenize a token back to its actual value. Possession of the token alone provides no extrinsic or exploitable meaning or value.
- Flexibility:
  - You can choose a tokenization strategy appropriate for your business using built-in data domains such as PAN, SSN, email, decimal, ASCII, and so on.
  - Each domain has configurable options for preserving portions of the original sensitive data and replacing the rest with token data, or for replacing the entire value with a token.
  - Tokenization operates seamlessly across wide area networks and distributed data centers.
- Performance:
  - High-performance operation ensures low-latency processing.
  - Performance scales linearly on each node with vertical and horizontal scaling for vaultless tokenization provided by the Anypoint Platform.