Runtime Fabric on VMs / Bare Metal Installation Prerequisites

Before installing Runtime Fabric on VMs / Bare Metal, review the information in this topic to ensure your environment is ready for installation.

Before You Begin

| Most installation issues on Runtime Fabric on VMs / Bare Metal result from improper infrastructure provisioning or configuration. |

Before you install Runtime Fabric on VMs / Bare Metal:

-

Refer to Shared Responsibility for Runtime Fabric on VMs / Bare Metal.

If your infrastructure does not meet the minimum hardware, operating system, and networking requirements, Runtime Fabric cannot operate successfully.

-

Runtime Fabric on VMs / Bare Metal requires tasks to be performed by the following members of your organization:

-

DevOps team to provision and manage the infrastructure

-

Network team to add ports to allowlists and configure proxy settings

-

Security team to review for compliance and obtain security certificates

Working with these teams in your organization ensures a successful installation and avoids performance and density issues.

-

Review the requirements in this topic and ensure that all prerequisites are met before installing Runtime Fabric on VMs / Bare Metal.

The installation process fails if the minimum requirements are not met.

See Anypoint Runtime Fabric on VMs / Bare Metal for a description of development and production configurations.

If needed, contact your MuleSoft representative for assistance.

General Installation Requirements

Knowledge in the following areas is required to install Runtime Fabric on VMs / Bare Metal:

-

Linux system commands

-

Working in Linux with root access. Installation requires root access (permission to run the `sudo` command) on all servers in your environment.

-

If running on Amazon Web Services (AWS), working with Terraform

-

Remote connection mechanisms, such as SSL and SSH

Ensure that you plan for:

-

Creating separate runtime fabrics for production and non-production applications

-

Creating separate runtime fabrics based upon environment network scope, such as DMZ versus private network

-

Ensuring that the runtime fabric default pod and services CIDR blocks do not conflict with the region's network
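The CIDR planning item above can be checked ahead of installation. The following is a minimal sketch that uses Python's standard `ipaddress` module. The address ranges are hypothetical placeholders, not Runtime Fabric defaults, and `find_overlaps` is an illustrative helper name.

```python
import ipaddress


def find_overlaps(cluster_cidrs, network_cidrs):
    """Return (cluster, network) pairs of CIDR blocks that overlap."""
    overlaps = []
    for cluster in cluster_cidrs:
        cluster_net = ipaddress.ip_network(cluster)
        for network in network_cidrs:
            if cluster_net.overlaps(ipaddress.ip_network(network)):
                overlaps.append((cluster, network))
    return overlaps


# Hypothetical values for illustration only: substitute the pod and service
# CIDR blocks of your cluster and the ranges used by your own network.
pod_and_service_cidrs = ["10.244.0.0/16", "10.100.0.0/16"]
organization_cidrs = ["10.0.0.0/16", "10.100.32.0/20"]

for cluster, network in find_overlaps(pod_and_service_cidrs, organization_cidrs):
    print(f"Conflict: cluster block {cluster} overlaps network range {network}")
```

An empty result means none of the listed ranges collide. Whether a reported overlap matters in practice depends on whether pods or services actually need to reach addresses in that range.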

Anypoint Platform Roles and Permissions

To successfully use Runtime Fabric, your Anypoint Platform account must have the following roles and permissions enabled:

-

To deploy and manage applications, you must have the ability to use Anypoint Runtime Manager. To deploy applications, you must also have the Exchange Contributors role enabled for your Anypoint Platform account.

-

To manage permissions for Anypoint Platform users, you must have the ability to use Anypoint Access Management.

-

To use Runtime Fabric, you must have the Organization Administrators role or the Manage Runtime Fabrics permission on the corresponding environments.

-

To delete Runtime Fabric instances, administrators need the Manage Runtime Fabrics permission at the organization level.

Infrastructure Requirements

Perform the following actions:

-

Disable swap memory.

-

Ensure that system clocks are synchronized, for example by confirming that chrony reports an in-sync status.

-

Use of certain third-party software packages, such as antivirus or deep packet inspection (DPI) software, may impact certain functionalities of the Runtime Fabric appliance. Refer to How Antivirus and DPI Software Impacts Runtime Fabric Functionality for more information.

-

Either provision the required hardware or have enough quota with your infrastructure provider to create the required hardware:

-

x86 or x64 architecture. Only x86 and x64 architectures are supported.

-

Virtual machines

-

Disks with provisioned IOPS

-

Virtual networks

-

Firewall rules and security groups

-

Load balancers

-

If provisioning hardware with AWS or Azure, you must have the correct permissions to create the required resources.

Dedicated disks are required to ensure optimum performance. The Runtime Fabric on VMs / Bare Metal installer formats and mounts the disks needed during installation. The default behavior of the AWS and Azure provisioning scripts creates a set of virtual machines and disks defined by the minimum requirements. It also creates a private network with the required network ports configured. This is optimal when evaluating Runtime Fabric on VMs / Bare Metal before integrating within your primary network. You should consult with your network administrator to determine if the default behavior is compatible. You might need to modify the provisioning scripts to accommodate your organization’s requirements.

-

Understand how to forward logs to your organization’s logging provider.

-

Obtain your organization’s SMTP provider information so that you can configure alerts.

-

Verify the following network items:

-

Outbound connections are routed to a proxy.

-

Outbound access is allowed using websockets (via port 443) to Anypoint Platform.

-

Your organization’s network and security policies, including:

-

The internal network IP address range in CIDR notation

-

The subnet range used for Runtime Fabric in CIDR notation

-

Whether virtual machines are allowed public IP addresses

-

How to gain shell access to each VM

-

Based on the function of your organization’s Mule applications, determine if inbound traffic should be enabled for Runtime Fabric on VMs / Bare Metal.

If you plan to enable inbound traffic, evaluate your network’s traffic to determine whether you need to configure dedicated nodes for the inbound load balancer. For additional information, refer to Network Configuration and Internal Load Balancer.

Operating System Requirements

Use the same operating system for each Runtime Fabric node. If you attempt to install Runtime Fabric on VMs / Bare Metal on different operating system versions or distributions, the installer fails.

Runtime Fabric on VMs / Bare Metal requires one of the following operating systems:

-

Red Hat (RHEL)

-

v7.4 - v7.9

-

v8.0 - v8.3

-

v8.4 (using Runtime Fabric Appliance version 1.1.1636064094-8b70d2d and later)

-

v8.6 (using Runtime Fabric Appliance version 1.1.1660253001-ab8f5e5 and later)

-

CentOS

-

v7.4 - v7.9

CentOS 8 has reached end-of-life. Consider using an alternative operating system.

-

Ubuntu v18.04 (using Runtime Fabric Appliance version 1.1.1625094374-7058b20 or later)

-

Ubuntu 20.04

|

There is a known control groups (cgroups) memory leak issue which can lead to pod creation failure or node malfunction in RHEL and CentOS 7. See the Knowledge Base Article for additional information. To avoid this issue, use RHEL or CentOS 8.x in your Runtime Fabric on VMs / Bare Metal installation. |
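The supported releases listed above can be encoded as a simple lookup for use in a provisioning script. This is a minimal sketch: the `SUPPORTED` table and the `is_supported` and `same_os_on_all_nodes` helpers are illustrative names, and the appliance-version conditions attached to some releases (for example, RHEL 8.4 and 8.6) are not modeled.

```python
# Supported releases transcribed from the list above, as inclusive
# (major, minor) ranges. Appliance-version conditions are not modeled.
SUPPORTED = {
    "rhel": [((7, 4), (7, 9)), ((8, 0), (8, 4)), ((8, 6), (8, 6))],
    "centos": [((7, 4), (7, 9))],
    "ubuntu": [((18, 4), (18, 4)), ((20, 4), (20, 4))],
}


def is_supported(distro, version):
    """Check a "major.minor" version string against the table above."""
    major, minor = (int(part) for part in version.split(".")[:2])
    ranges = SUPPORTED.get(distro.lower(), [])
    return any(low <= (major, minor) <= high for low, high in ranges)


def same_os_on_all_nodes(nodes):
    """nodes is a list of (distro, version) tuples, one per node."""
    return len({(distro.lower(), version) for distro, version in nodes}) == 1


print(is_supported("rhel", "8.5"))      # False: 8.5 is not in the list above
print(is_supported("ubuntu", "20.04"))  # True
```

The second helper reflects the requirement that every node runs the same operating system; how you collect each node's distribution and version is left to your tooling.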

Network and Port Requirements

Runtime Fabric on VMs / Bare Metal requires specific network and port configuration for installation and operation.

|

Runtime Fabric on VMs / Bare Metal does not support cross-regional deployments. Network latency between each node can cause problems with cluster health. |

Bandwidth Requirements

A high bandwidth network is required, with a minimum of 1 GBps connectivity between each node operating Runtime Fabric on VMs / Bare Metal, and minimum of 100 MBps for outbound connectivity to the Internet.

For an example of the traffic volume between Runtime Fabric and the Anypoint control plane, consider the following:

-

The image for Mule Runtime 4.3.0 is 740 MB.

-

A Runtime Fabric appliance upgrade requires 1.5 GB. The appliance upgrade process takes approximately 30 minutes for a production-size cluster (3 controllers and 3 workers).

Required Port Settings

The following sections list the TCP and UDP network port requirements for Runtime Fabric on VMs / Bare Metal.

Ports Used During Installation

The following table lists the ports that must be accessible on all nodes. These ports are used for internal communication between nodes during installation. After completing the installation, you can safely disable these ports.

| Port | Protocol | Description |
|---|---|---|
| 4242 | TCP | Bandwidth checker |
| 61008-61010 | TCP | Used during installation |
| 61022-61024 | TCP | Installer agent ports |
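To verify that the installation ports are reachable between nodes, a short script can expand the port ranges from the table above and attempt a TCP connection to each one. This is a minimal sketch using only the Python standard library. The helper names are illustrative, and the node address in the usage comment is a hypothetical placeholder.

```python
import socket

# Port specs taken from the installation table above.
INSTALL_PORTS = ["4242", "61008-61010", "61022-61024"]


def expand_ports(spec):
    """Expand a spec such as "4242" or "61008-61010" into a list of ports."""
    start, _, end = spec.partition("-")
    first = int(start)
    last = int(end) if end else first
    return list(range(first, last + 1))


def tcp_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Example usage (hypothetical node address):
#   for spec in INSTALL_PORTS:
#       for port in expand_ports(spec):
#           print(port, tcp_port_open("192.0.2.10", port))
```

A connection succeeds only when a process is listening on the target port, so a `False` result can mean either that a firewall blocks the port or that nothing is listening yet. Treat the output as a hint to investigate rather than as proof of a firewall problem.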

Port Used by the Persistence Gateway

The Persistence Gateway requires a Postgres-compliant database to store persistent data across Mule application replicas. Ensure that your Kubernetes cluster has access to this database and port. See Persistence Gateway.

Network TCP and UDP Ports

The following table lists all ports that must be open, along with the respective protocols used and the request origin and destination:

| Port | Layer 4 Protocol | Layer 5 Protocol | Source | Destination | Description |
|---|---|---|---|---|---|
| 123 | UDP | NTP | All nodes | Internet | Clock synchronization via Network Time Protocol |
| 443 | TCP | HTTPS | API consumers | Controller nodes | Allow inbound API requests to ingress controllers |
| 443 | TCP | AMQP over WebSockets | Controller nodes | Internet | Anypoint Platform management services |
| 443 | TCP | HTTPS | All nodes | Internet | API Manager policy updates, API Analytics Ingestion, and Resource retrieval (application files, container images). |
| 443 (v1.8.50, or later) | TCP | Lumberjack | All nodes | Internet | Anypoint Monitoring, Anypoint Visualizer |
| 5044 (deprecated) | TCP | Lumberjack | All nodes | Internet | Anypoint Monitoring, Anypoint Visualizer. This port and hostname are deprecated in Anypoint Runtime Fabric, version 1.8.50 and later. If you are using a previous version of Anypoint Runtime Fabric you must add this port and hostname to your allow list. If you are using a newer version, use the port and hostname specified above. This is applicable to endpoints in both the US and EU clouds. |
| 53 | TCP | DNS | localhost | localhost | Internal cluster DNS |
| 2379, 2380, 4001, 7001 | TCP | HTTPS | All nodes | Controller nodes | etcd server communications |
| 3008, 3010, 3011, 3012 | TCP | HTTPS / gRPC | All nodes | All nodes | Anypoint Runtime Fabric internal services |
| 3009 | TCP | HTTPS | All nodes | Internal network | Anypoint Runtime Fabric internal Ops Center |
| 3022 - 3025 | TCP | SSH | All nodes | All nodes | Internal SSH control services |
| 3080 | TCP | HTTPS | All nodes | Controller nodes | Anypoint Runtime Fabric internal Ops Center |
| 5000 | TCP | HTTPS | All nodes | Controller nodes | Internal container registry |
| 6060 | TCP | HTTPS / gRPC | All nodes | All nodes | Anypoint Runtime Fabric internal services |
| 6443, 8080 | TCP | HTTPS / HTTP | All nodes | Controller nodes | Kubernetes API server |
| 7373 | TCP | RPC | localhost | localhost | Serf RPC for peer-to-peer connectivity |
| 7496 | TCP | HTTPS | All nodes | All nodes | Serf (health checking agent) / Peer-to-peer connectivity |
| 7575 | TCP | gRPC | All nodes | All nodes | Cluster status API |
| 10248-10250 | TCP | HTTPS | All nodes | All nodes | Kubernetes components |
| 10255 | TCP | HTTPS | All nodes | All nodes | Kubernetes components |
| 30000-32767 | TCP | Application-specific | All nodes | All nodes | Internal services port range used to route requests to applications |
| 32009 | TCP | HTTPS | Internal network | Controller nodes | Anypoint Runtime Fabric Ops Center |
| 32009 | TCP | HTTPS | Controller nodes | All nodes | Ops Center internal API |
| 53 | UDP | DNS | localhost | localhost | Internal cluster DNS |
| 7496 | UDP | HTTPS | All nodes | All nodes | Serf (health checking agent) / Peer-to-peer connectivity |
| 8472 | UDP | VXLAN | All nodes | All nodes | Overlay network |
| 30000-32767 | UDP | Application-specific | All nodes | All nodes | Internal services port range used to route requests to applications |

Port IP Addresses and Hostnames to Add to the Allowlist

In your network configuration, you must add the hostnames and ports of Anypoint Platform components and services to allowlists to enable Anypoint Runtime Fabric to communicate with them. This is also required to download dependencies during installation and upgrades.

The following table lists the ports and hostnames to add to your allowlists to allow communication between Anypoint Runtime Fabric and Anypoint Platform.

|

The following table does not include ports and hostnames from API Manager that you may also need to allow. Refer to the API Manager documentation for a list of additional ports and hostnames. |

Because the following endpoints use mutual TLS authentication to establish the connection, you must configure SSL passthrough to allow the following certificate:

-

US control plane: transport-layer.prod.cloudhub.io and configuration-resolver.prod.cloudhub.io

-

EU control plane: transport-layer.prod-eu.msap.io and configuration-resolver.prod-eu.msap.io

| Port | Protocol | Hostnames | Description |
|---|---|---|---|
| 443 | AMQP over WebSockets | | Runtime Fabric message broker for interaction with the control plane. |
| 443 (v1.8.50, or later) | TCP (Lumberjack) | | Anypoint Monitoring agent for Runtime Fabric. |
| 443 | HTTPS | | Anypoint Platform for pulling assets. |
| 443 | HTTPS | | Kubernetes base charts repository. |
| 443 | HTTPS | | Kubernetes base charts repository. |
| 443 | HTTPS | | Runtime Fabric version repository. The Runtime Fabric installation uses software from this repository during installation and upgrades. |
| 443 | HTTPS | | Anypoint Exchange for application assets. |
| 443 | HTTPS | | Anypoint Exchange for application files. |
| 443 | HTTPS | | Runtime Fabric Docker repository. |
| 443 | HTTPS | | Runtime Fabric Docker repository. |
| 443 | HTTPS | | Runtime Fabric Docker repository. |
| 443 | HTTPS | | Runtime Fabric Docker image delivery. |
| 443 | HTTPS | | Runtime Fabric Docker repository. |
| 443 | HTTPS | | Anypoint Configuration Resolver. |

Required Network Settings

In addition to the previous port requirements, the following network settings are required to operate Runtime Fabric on VMs / Bare Metal:

-

A subnet with enough allocatable IP addresses to add VMs to Runtime Fabric on VMs / Bare Metal

The pod CIDR block must not overlap with IP addresses that pods or servers use to communicate. If services within the cluster or services that you installed on nodes need to communicate with an IP range that overlaps the pod or service CIDR block, a conflict can occur. If a CIDR block is in use, but pods and services do not use those IP addresses to communicate, there is no conflict. If you deploy more than one cluster, each cluster can reuse the same IP range, because those addresses exist within the cluster nodes, and cluster-to-cluster communication is relayed on the external interfaces.

-

Shell/SSH access to each VM used to install Runtime Fabric on VMs / Bare Metal

-

Static private IPv4 addresses assigned to each VM, which persist between restarts

-

Kernel IP forwarding to enable internal Kubernetes load balancing. IPv4 forwarding is enabled on each VM during installation.

-

Bridge-netfilter to enable the host Linux kernel to translate packets to and from hosted containers. This kernel module is enabled on each VM during installation.

-

Access to an SMTP server to manage e-mail alerts triggered by Runtime Fabric on VMs / Bare Metal

-

An external load balancer to balance external requests to the internal load balancer running on each controller VM

-

Your environment’s HTTP proxy configuration if outgoing requests to the Internet must route through a proxy

-

A SOCKS5 proxy for Anypoint Monitoring and Visualizer support if outgoing requests to the Internet must route through a proxy

| If you’re using VMware and are unable to connect to your existing applications or deploy new applications, refer to RTF Networking Issues with VMware in the MuleSoft Knowledge Base. |

Mule License

To deploy Mule applications and API proxies, you must have access to your organization’s Mule license file.

Installation Requirements

During installation, the installation package is downloaded to a controller VM that serves as the leader of the installation.

Runtime Fabric on VMs / Bare Metal is configured to run as a cluster across multiple virtual machines. Each VM operates as one of the following roles:

-

Controller VM, which is dedicated to operate and run Runtime Fabric services. The internal load balancer runs within controller VMs.

-

Worker VM, which is dedicated to run Mule applications.

One controller VM acts as a leader during the installation. This VM downloads the installer and makes it accessible to each other VM on port 32009. Other VMs copy the installer files from the leader, perform the installation, and join the leader to form a cluster.

During the installation, a set of pre-flight checks run to verify the minimum hardware, operating system, and network requirements for Runtime Fabric. If these requirements are not met, the installer fails.

The installation process combines the following steps:

-

For AWS and Azure, provisions infrastructure per the system requirements

-

Installs Runtime Fabric across the VMs

-

Activates Runtime Fabric with your Anypoint organization

-

Installs your organization’s Mule Enterprise license

To complete the previous steps, specify environment variables for each VM at the beginning of installation. The leader requires additional variables to activate and add the Mule license. A script runs on each VM to perform the following actions:

-

Format and mount each dedicated disk.

-

Add mount entries for each disk to `/etc/fstab`.

-

Add iptables rules.

-

Enable required kernel modules.

-

Start the installation.

The controller VM that is the leader for installation performs the following actions:

-

Runs the activation script after installation.

-

Runs the Mule license insertion script after registration.

Production Configuration Requirements

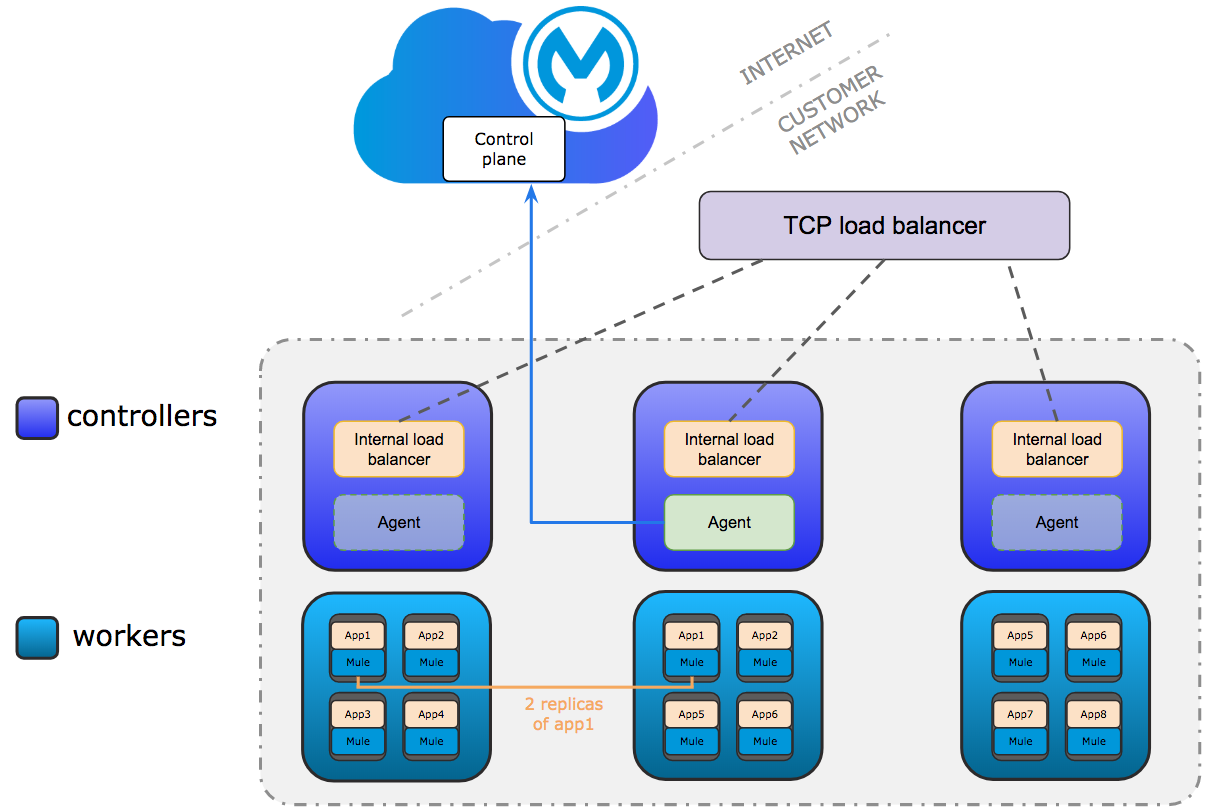

A minimum of 6 nodes are required for a production configuration of Runtime Fabric on VMs/ Bare Metal, as shown in the following diagram.

The following sections describe the minimum system requirements of controller and worker nodes in a production configuration.

| Runtime Fabric on VMs / Bare Metal does not support running controller nodes using burstable VMs. When deploying an application on a worker node, you can allocate vCPU for bursting. See Resource Allocation and Performance on Anypoint Runtime Fabric for additional information. |

Controller Nodes

The minimum number of controller nodes required for a production environment is three. This requirement reflects the minimum requirements to maintain high-availability and ensure system performance.

An odd number of controller nodes is required for fault tolerance. Even numbers of controller nodes are not supported.

The maximum number of supported controller nodes is 5.

Each controller node must have the following resources:

-

A minimum of 2 dedicated cores.

-

A minimum of 8 GiB memory.

You must consider the amount of resources needed for the internal load balancer when sizing controller nodes. For additional information, refer to Internal Load Balancer resource allocation.

-

A minimum of 80 GiB dedicated disk for the operating system.

-

A minimum of 20 GiB for the `/tmp` directory. The `/tmp` directory needs read, write, and execute permissions.

-

A minimum of 8 GiB for the `/opt/anypoint/runtimefabric` directory.

-

A minimum of 1 GiB for the `/var/log/` directory.

-

A minimum of 60 GiB dedicated disk with at least 3000 provisioned IOPS to run the etcd distributed database. This translates to a minimum of 12 Megabytes per second (MBps) disk read/write performance.

-

This disk is referred to as the `etcd` device.

-

A minimum of 250 GiB dedicated disk with 1000 provisioned IOPS for Docker overlay and other internal services. This translates to a minimum of 4 MBps disk read/write performance.

-

This disk is referred to as the `docker` device.
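The IOPS-to-throughput figures quoted for the dedicated disks can be reproduced with simple arithmetic. The sketch below assumes each I/O operation transfers 4 KB and that 1 MB equals 1000 KB. The 4 KB size is an assumption inferred from the numbers in this topic, not a documented parameter, and `throughput_mbps` is an illustrative helper name.

```python
def throughput_mbps(iops, io_size_kb=4):
    """Approximate disk throughput in MBps for a given IOPS figure.

    Assumes every operation transfers io_size_kb kilobytes and 1 MB = 1000 KB.
    The 4 KB default is an assumption that reproduces the figures in this topic.
    """
    return iops * io_size_kb / 1000


print(throughput_mbps(3000))  # etcd device: 12.0
print(throughput_mbps(1000))  # docker device: 4.0
```

If your storage provider quotes IOPS at a different block size, pass that size as `io_size_kb` to compare like with like.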

Scaling Considerations

Consider scaling the number of controller nodes in the following situations:

-

Fault tolerance is needed to mitigate the impact of controller node hardware failure. The minimum of 3 controller nodes tolerates the loss of 1 controller. To tolerate the loss of 2 controllers, configure a total of 5 controllers.

-

Production traffic is being served with applications running on Runtime Fabric on VMs / Bare Metal.
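The relationship between controller count and fault tolerance follows from majority quorum. The sketch below assumes the usual majority rule used by quorum-based stores such as etcd, and applies the limits stated in this topic (odd counts only, at most 5 controllers). The function name is illustrative.

```python
MAX_CONTROLLERS = 5  # maximum number of controller nodes stated in this topic


def tolerated_controller_failures(controllers):
    """Return how many controllers can be lost while a majority remains."""
    if controllers < 1 or controllers > MAX_CONTROLLERS:
        raise ValueError(f"controller count must be between 1 and {MAX_CONTROLLERS}")
    if controllers % 2 == 0:
        raise ValueError("even numbers of controller nodes are not supported")
    quorum = controllers // 2 + 1  # smallest majority of the cluster
    return controllers - quorum


for count in (1, 3, 5):
    print(count, "controllers tolerate", tolerated_controller_failures(count), "failure(s)")
```

Under this rule a single controller tolerates no failures, which matches the warning that single-controller clusters cannot be recovered after etcd corruption.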

Worker Nodes

Runtime Fabric on VMs / Bare Metal requires at least three worker nodes to run Mule applications and API gateways.

The maximum number of worker nodes supported is 16.

Provision at least one extra worker node to preserve uptime in the event a node becomes unavailable (fault-tolerance) or during maintenance operations that require restarts, such as OS patching.

This enables the safe removal of a worker node from the cluster to apply upgrades, without impacting the availability of applications.

|

Runtime Fabric can reserve up to 0.5 cores per worker node for internal services. This is important when determining the amount of CPU cores and worker nodes needed. For example, your Runtime Fabric could have three worker nodes and two CPU cores for each worker node. If Runtime Fabric reserves 0.3 cores per worker node, a total of 0.9 cores, the number of available cores displayed in Runtime Manager is 5.1 vCPU instead of 6 vCPU. |
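The capacity arithmetic in the note above can be expressed as a small helper for sizing exercises. This is a minimal sketch: `available_vcpu` is an illustrative name, and the reserved amount per node is an input you estimate, up to the 0.5-core ceiling mentioned above.

```python
def available_vcpu(worker_nodes, cores_per_node, reserved_per_node):
    """Cores left for applications after the per-node reservation."""
    total = worker_nodes * cores_per_node
    reserved = worker_nodes * reserved_per_node
    # Round to avoid floating-point noise such as 5.1000000000000005.
    return round(total - reserved, 2)


print(available_vcpu(3, 2, 0.3))  # 5.1, the example from the note above
print(available_vcpu(3, 2, 0.5))  # 4.5, if the full 0.5 cores are reserved
```

Planning against the 0.5-core figure gives a conservative estimate of the capacity shown in Runtime Manager.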

Each worker node must have the following resources:

-

A minimum of 2 dedicated cores.

-

A minimum of 15 GiB memory.

You must consider how many Mules and tokenizers you plan to run on Runtime Fabric, and what they are licensed to deploy. Plan on approximately 0.5 cores per worker node for overhead.

-

A minimum of 80 GiB dedicated disk for the operating system.

-

A minimum of 20 GiB for the `/tmp` directory. The `/tmp` directory needs read, write, and execute permissions.

-

A minimum of 8 GiB for the `/opt/anypoint/runtimefabric` directory.

-

A minimum of 1 GiB for the `/var/log/` directory.

-

A minimum of 250 GiB dedicated disk with at least 1000 provisioned IOPS for Docker overlay and other internal services. This translates to a minimum of 4 MBps disk read/write performance.

Having 250 GiB ensures there is enough space to run applications, cache docker images, provide temporary storage for running applications, and provide log storage.

Additional Resource Requirements for Production Environments

When using Runtime Fabric on VMs / Bare Metal in a production environment:

-

An external load balancer must be configured to load balance external requests to the internal load balancer running on each controller VM, with a health check configured for TCP port 443. A TCP load balancer with a server pool consisting of each of the controller nodes is sufficient.

-

The internal load balancer must run on at least 3 replicas to maintain availability.

-

Applications serving inbound requests must be scaled to a minimum of 2 replicas.

Development Configuration Requirements

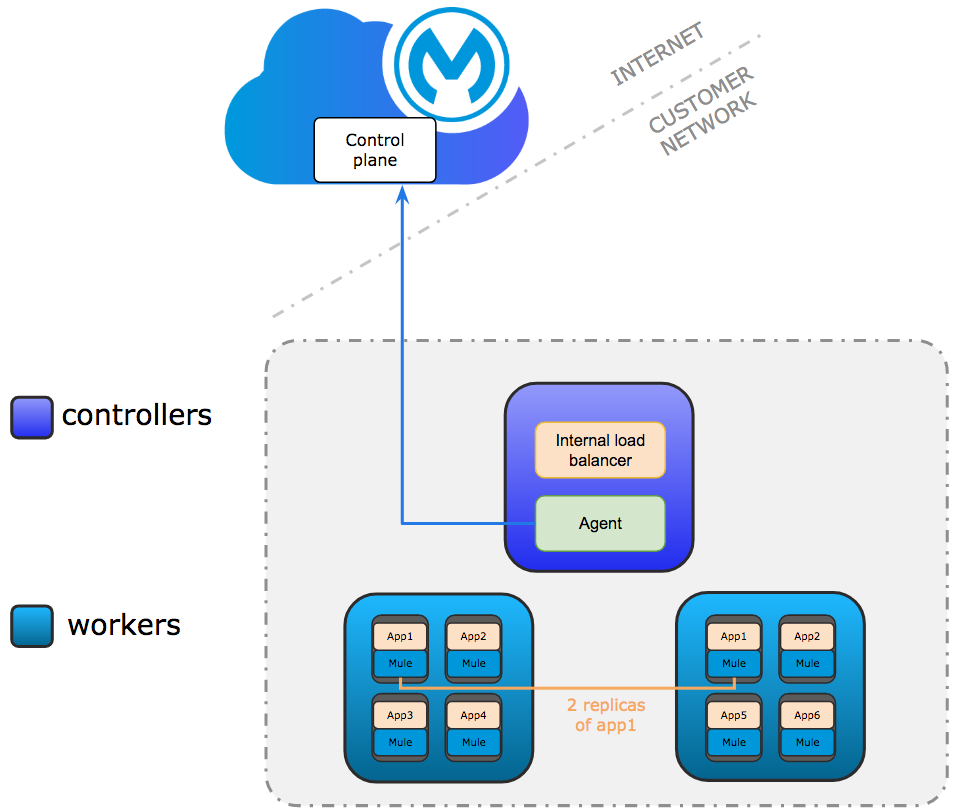

Runtime Fabric on VMs / Bare Metal requires a minimum of three nodes in a development environment as shown in the following diagram:

| Runtime Fabric on VMs / Bare Metal does not support running controller nodes using burstable VMs. When deploying an application on a worker node, you can allocate vCPU for bursting. See Resource Allocation and Performance on Anypoint Runtime Fabric for additional information. |

The following sections describe the minimum system requirements of controller and worker nodes in a development configuration.

Controller Nodes

The minimum number of controller nodes required for a non-production environment is one. Even numbers of controllers are not supported. Keep in mind that single-controller clusters cannot be recovered if the etcd database becomes corrupted. For an example, see the issue described in this knowledge article.

The maximum number of supported controller nodes is 5.

Each controller node must have the following resources:

-

A minimum of 2 dedicated cores.

-

A minimum of 8 GiB memory.

-

A minimum of 80 GiB dedicated disk for the operating system.

-

A minimum of 20 GiB for the `/tmp` directory. The `/tmp` directory needs read, write, and execute permissions.

-

A minimum of 8 GiB for the `/opt/anypoint/runtimefabric` directory.

-

A minimum of 1 GiB for the `/var/log/` directory.

-

A minimum of 60 GiB dedicated disk with 3000 provisioned IOPS for etcd. This translates to a minimum of 12 Megabytes per second (MBps) disk read/write performance.

-

A minimum of 100 GiB dedicated disk with 1000 provisioned IOPS for Docker overlay and other internal services. This translates to a minimum of 4 MBps disk read/write performance.

Worker Nodes

Runtime Fabric on VMs / Bare Metal requires at least two worker nodes to run Mule applications and API gateways. The maximum number of worker nodes supported is 16.

Provision at least one extra worker node to preserve uptime in the event a node becomes unavailable (fault-tolerance) or during maintenance operations that require restarts, such as OS patching.

This enables safe removal of a worker node from the cluster to apply upgrades, without impacting availability of applications.

|

Runtime Fabric can reserve up to 0.5 cores per worker node for internal services. This is important when determining the amount of CPU cores and worker nodes needed. For example, your Runtime Fabric could have three worker nodes and two CPU cores for each worker node. If Runtime Fabric reserves 0.3 cores per worker node, a total of 0.9 cores, the number of available cores displayed in Runtime Manager is 5.1 vCPU instead of 6 vCPU. |

Each worker node must have the following resources:

-

A minimum of 2 dedicated cores.

-

A minimum of 15 GiB memory.

-

A minimum of 80 GiB dedicated disk for the operating system.

-

A minimum of 20 GiB for the `/tmp` directory. The `/tmp` directory needs read, write, and execute permissions.

-

A minimum of 8 GiB for the `/opt/anypoint/runtimefabric` directory.

-

A minimum of 1 GiB for the `/var/log/` directory.

-

A minimum of 100 GiB dedicated disk with at least 1000 provisioned IOPS for Docker overlay and other internal services. This translates to a minimum of 4 MBps disk read/write performance.

Having 100 GiB ensures there is enough space to run applications, cache docker images, provide temporary storage for running applications, and provide log storage.